Safety in Autonomous Driving explained in a nutshell (A-Z) – Today starting with “A” like ASIL

By Ole Harms

Over the coming months I will regularly share quick glimpses of safety-related issues that matter when bringing Autonomous Driving (AD) onto the streets. I believe transparency is key here, and I want to contribute a bit by providing some short and easy-to-digest explanations.

I will try to cover as many letters of the alphabet as possible, though not necessarily in alphabetical order. But today, I start at the very beginning – the “A”. Here, ASIL came to my mind first, and I briefly put together what I thought might be of interest to a broader audience without going into too much detail.

ASIL is a risk classification scheme defined by an ISO standard (ISO 26262, to be precise, if you want to dig deeper). The acronym stands for Automotive Safety Integrity Level. The different levels – A, B, C, D – as well as the additional QM (Quality Management) level are the result of a hazard analysis and risk assessment (HARA) and trigger the definition of top-level safety requirements – so-called “safety goals” – for automotive functions and components, implemented both in software and hardware.

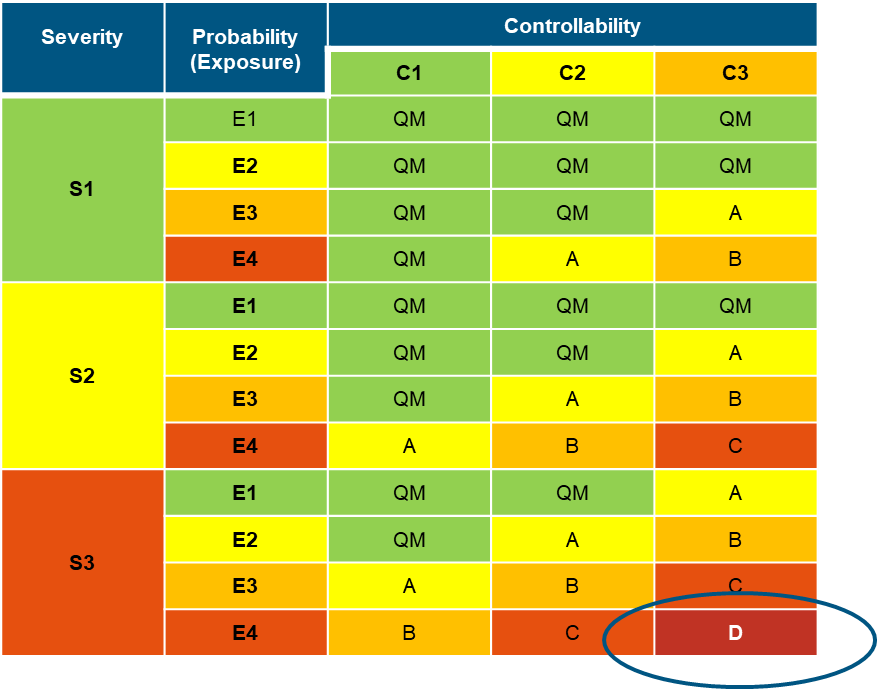

The ASIL rating behind these safety goals is based on an analysis of three factors in combination:

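- Severity (S) of the potential physical harm to the people involved (passengers as well as other road users)

- Exposure (E) as the probability of occurrence of a driving situation, and

- Controllability (C) of the malfunction during the driving situation, i.e. how well the people involved can avoid the harm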

The higher the rating, the worse the impact (e.g., S3 = life-threatening / fatal injuries or E4 = highly probable occurrence). Context (e.g., region-specific weather conditions, traffic density) and interpretation always play a part in determining the rating.

Without going into too much detail here, it is obvious that a combination of S3, E4 and C3 describes a highly hazardous, life-threatening situation. For this situation the evaluated function is then rated “ASIL D”, which implies that rigorous, powerful safety measures must be applied.
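To make the combination of the three factors more tangible, here is a minimal sketch in Python. It relies on a commonly cited shortcut for the ASIL determination table in ISO 26262: add up the three class numbers, and a sum of 10 gives ASIL D, 9 gives C, 8 gives B, 7 gives A, and anything lower gives QM. The function name `determine_asil` and the handling of class 0 (treated as QM here) are assumptions made for illustration; the table in the standard remains the authoritative source.

```python
def determine_asil(severity: int, exposure: int, controllability: int) -> str:
    """Return the ASIL for S0-S3, E0-E4 and C0-C3 using the sum shortcut."""
    if not (0 <= severity <= 3 and 0 <= exposure <= 4 and 0 <= controllability <= 3):
        raise ValueError("expected S in 0..3, E in 0..4, C in 0..3")

    # Assumption of this sketch: if any factor is in class 0
    # (no injuries, incredible exposure, controllable in general),
    # no ASIL is assigned and the result is reported as QM.
    if 0 in (severity, exposure, controllability):
        return "QM"

    total = severity + exposure + controllability
    return {7: "A", 8: "B", 9: "C", 10: "D"}.get(total, "QM")


print(determine_asil(3, 4, 3))  # the S3 / E4 / C3 case from the text: D
print(determine_asil(3, 4, 2))  # one class easier to control: C
print(determine_asil(1, 2, 3))  # light injuries, low exposure: QM
```

Lowering any single factor by one class lowers the result by one level, which is why reducing exposure or improving controllability matters as much as limiting severity.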

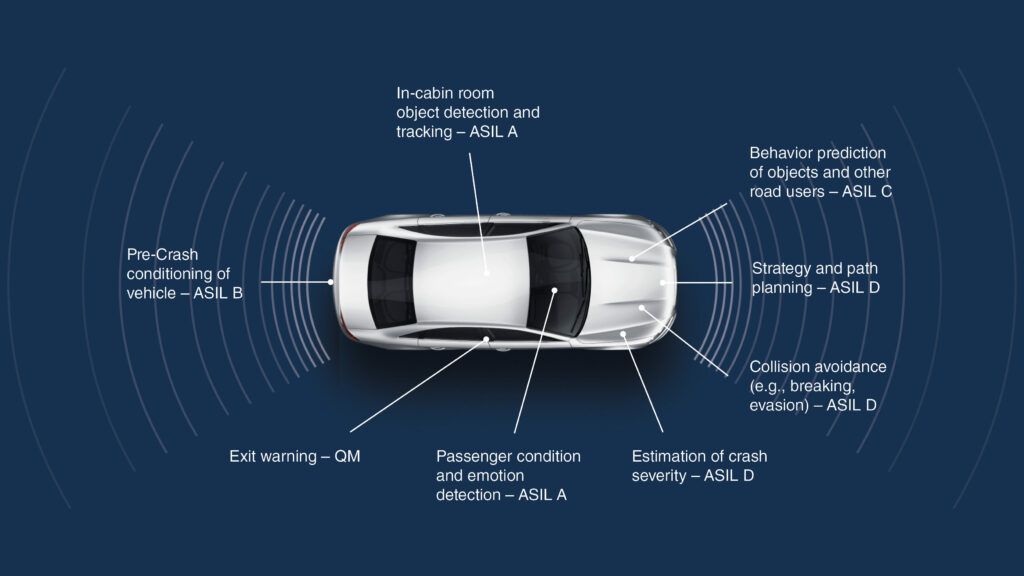

It surely comes as no surprise that many of the software and hardware modules that enable autonomous driving are exposed to potential situations that lead to an “ASIL D” rating. This is especially true for forward-facing functions, see image 2.

Because of this, the above-mentioned “safety goals” determine each and every step of our development process – from defining, specifying and developing to verifying and validating a safety function. Just as an example: One very important property is the rate of random hardware failures, which in case of an ASIL D requirement has to be less than 10 FIT (Failures In Time, where 1 FIT is one failure per billion hours of operation). This equals 1 failure in 100 million hours or 0.00000001 failures per hour.
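For readers who want to check the units themselves, here is a small sketch. It assumes the usual definition of 1 FIT as one failure per 10^9 hours of operation and takes 0.00000001 failures per hour as the target rate; the function names are made up for this example. Exact fractions are used so that no rounding creeps in.

```python
from fractions import Fraction

HOURS_PER_FIT = 10**9  # 1 FIT = 1 failure per 10^9 hours of operation


def failures_per_hour_to_fit(rate):
    """Convert a failure rate in failures per hour into FIT."""
    return Fraction(rate) * HOURS_PER_FIT


def fit_to_failures_per_hour(fit):
    """Convert a failure rate in FIT into failures per hour."""
    return Fraction(fit) / HOURS_PER_FIT


def hours_per_failure(fit):
    """Average number of operating hours per failure for a given FIT value."""
    return Fraction(HOURS_PER_FIT) / Fraction(fit)


target_rate = Fraction(1, 10**8)              # 0.00000001 failures per hour
print(failures_per_hour_to_fit(target_rate))  # 10 (FIT)
print(hours_per_failure(10))                  # 100000000 (hours)
```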

Just to give you a flavor of how these “safety goals” finally translate into concrete measures, let us look at the example of Perception: The Perception module generates an understanding of the outside world and its interaction with the vehicle based on information provided by different sensors, be it cameras, Radar or Lidar sensors. If a camera failure is detected while driving, the Perception module can still rely, for example, on radar sensors (which detect static or moving objects) to bring the vehicle into a safe state.

So, meeting safety goals in AD functions is often achieved through redundancy (e.g. multiple sensors, diverse ways of data processing) at various levels, so that the failure of one system does not compromise the safe operation of the vehicle as a whole.
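The idea of falling back on the remaining sensors can be sketched in a few lines. This is a deliberately simplified illustration, not a description of any production system: the mode names, the `select_mode` function and the sensor labels are hypothetical, and a real vehicle would weigh far more than a health flag per sensor.

```python
from enum import Enum


class DrivingMode(Enum):
    NOMINAL = "nominal"                # all sensors healthy
    DEGRADED = "degraded"              # keep going only to reach a safe state
    EMERGENCY_STOP = "emergency_stop"  # no usable perception input left


def select_mode(sensor_ok: dict) -> DrivingMode:
    """Pick a driving mode from a mapping of sensor name to health flag."""
    if not sensor_ok:
        raise ValueError("at least one sensor must be reported")

    healthy = [name for name, ok in sensor_ok.items() if ok]
    if len(healthy) == len(sensor_ok):
        return DrivingMode.NOMINAL
    if healthy:
        # At least one redundant sensor still works, e.g. radar after a camera failure.
        return DrivingMode.DEGRADED
    return DrivingMode.EMERGENCY_STOP


# Camera failure while driving: radar and lidar are still available.
print(select_mode({"camera": False, "radar": True, "lidar": True}).value)  # degraded
```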